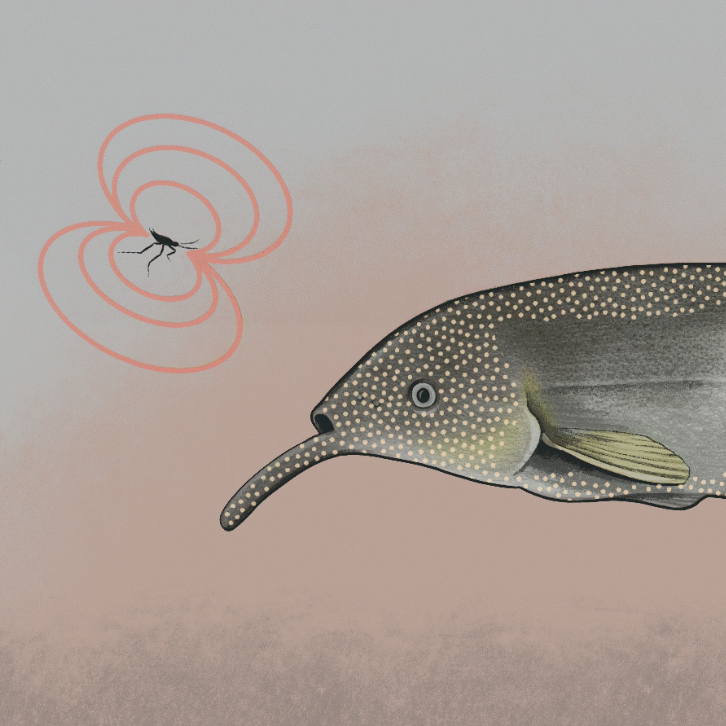

NEW YORK – When you run on a forest trail, you don’t believe that the trees, which appear to get bigger as you get closer to them, are rushing toward you. That’s because your brain predicts and corrects for how your own movement affects the visual input you receive from moment to moment. It turns out that the worldview of the elephantnose fish, silhouetted in the colorful maps of brain activity above, could help tease out how brains do this.

These electric fish navigate, communicate and track prey with organs specialized for generating and detecting electric fields. To survive, they need to distinguish changes in their fields caused by their own body movements from changes caused by nearby plants, fish and the water fleas they eat.

“For the fish, electric fields are like an extension of their sense of touch,” says postdoctoral research scientist Avner Wallach, PhD, in the lab of Nathaniel Sawtell, PhD, at the Zuckerman Institute. “Variations in those fields help the fish identify what is happening in their environment.”

Dr. Wallach studied the fish swimming freely in a round tank and monitored brain activity within the creatures’ electrosensory lobe (ELL). This region integrates different types of inputs, such as motor signals from the tail and various sensory signals, to filter out sensory noise. The data maps shown above summarize this filtering process as the information propagates from the ELL’s input (left) to the output of two types of ELL neurons (middle and right), all recorded by the researchers while the fish swam around an object.

The researchers expect that this work will help reveal how our brains integrate different sources of information to keep our view of the world stable, even as our running, shouting and other actions feed potentially confounding self-generated signals into our brains.

To learn more, read the paper, “An Internal Model for Canceling Self-Generated Sensory Input in Freely Behaving Electric Fish,” by Avner Wallach, PhD, and Nathaniel Sawtell, PhD, published today in Neuron.